Hello, and welcome to the seventh post on Compassionate Nature. Psychological research uses standardised measures to obtain data that support theories, help us understand behaviours and assess the effectiveness of interventions. Combining and comparing results across studies is a helpful way to gain more insight and to support generalisation of what the data might be suggesting. What could go wrong?

When building something, say a bookcase, it is really important to get the measurements right. You have to stick to the same unit of measurement, as mixing up your millimetres and metres could result in a bookcase with rather long shelves. It is best to use a common form of measure, such as a tape measure with defined, standard values, for consistency. And it’s best to check and double-check your measurements to ensure a meaningful result, in this case a well-constructed bookcase.

The same principle applies to psychological (or, for that matter, any) research; otherwise, how do you compare results across studies that use different measures? Except… well, although psychologists love a good measure, especially those who enjoy statistics and software like SPSS, it has been proposed that psychologists have a problem: the toothbrush problem.

“Psychological constructs and measures suffer from the toothbrush problem: no self-respecting psychologist wants to use anyone else’s.” Elson et al. (2023)

For a recent comment piece, Elson et al. (2023) looked at how many times the measures available in APA PsycTests, the main database of psychological measures, have been used. They found that the majority of measures had been used very few times, some just once or twice. Only in clinical psychology was there frequent reuse of the same measures, relating to mental health conditions such as depression and anxiety. There are many reasons for this: researchers can’t find a suitable measure for their specific study; the wording of a measure isn’t appropriate for the study sample (e.g. an adult measure needs to be modified for children, or requires translation); or the wording has become out of date or needs to be in a shorter format. And yes, academic research “market forces” and the desire for publication recognition may also lead researchers to create their own.

Another reason for creating or revising a measure is that the research is considering a specific psychological construct. The name of a measure often doesn’t help here: measures can use similar terms but evaluate different constructs, so you have to look carefully at what the items in a measure are actually measuring, and at how the constructs are defined. A measure may also be unidimensional (i.e. measuring along a single continuum) or multidimensional (i.e. considering multiple factors).

This is as relevant to psychological research on compassion and environmental psychology as it is to the wider field that Elson et al. considered, so to illustrate the point, let’s look at nature connection and pro-environmental behaviour.

Measuring nature connection.

Or is that connectedness to nature? Words matter, as they define what we are talking about. In a review of studies of nature connection measures, Häyrinen & Pynnönen (2020) highlighted that three terms were widely used: connectedness to nature/nature connectedness was the most used, followed by connection to nature/nature connection and then nature relatedness. Overall, the definitions associated with these terms cover the experiential sense of connection or relationship to the natural environment (or elements of nature), which may include one or more of three psychological aspects: affect, cognition and behaviour. Some of the measures used in nature connection research (I’ll stick with that term for this piece) measure one aspect (unidimensional) and others more than one (multidimensional). So it is not just semantics: how can you tell from a study title, abstract or summary what it’s actually measuring? You can’t, unless you read the whole paper, assuming you have access to it.

There are lots of measures relating to nature connection. Some are used more than others, and some measures used in studies of nature connection perhaps reflect attitudes or behaviours towards nature more than connection. More frequently used measures include the Connectedness to Nature Scale (Mayer & Frantz, 2004), Inclusion in Nature Scale (Schultz, 2002), Nature Relatedness Scale (Nisbet et al., 2009), New Ecological Paradigm (Dunlap et al., 2000), Nature Connection Index (Richardson et al., 2019) and Environmental Identity (Clayton, 2003), while less frequently used ones include Love and Care for Nature (Perkins, 2010), Disposition to Connect with Nature (Brügger et al., 2011) and the Attachment, Identity, Materialism, Experiential and Spiritual (AIMES) Connection to Nature scale (Meis-Harris et al., 2021). There are many more I haven’t mentioned, but hopefully these give you a flavour of the diverse names of measures relating to nature connection.

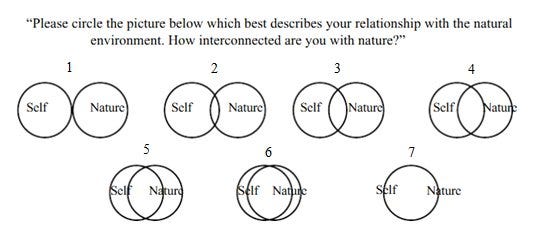

They all appear to measure nature connection or elements of it, with some also measuring related constructs such as pro-environmental behaviours. They employ different formats, although most consist of a set of statements or questions, and respondents indicate how much they agree or disagree with each one on a Likert scale. The Inclusion in Nature Scale has only one item: a series of Venn-like diagrams, each showing two circles labelled Self and Nature overlapping to different degrees, from which the respondent chooses the one that best represents how they see themselves.

The use of similar but different measures can pose problems for one of the key types of research, the meta-analysis, which often attracts media attention because meta-analyses are seen as significant and powerful pieces of research. In a meta-analysis, the researchers carry out a literature search of published papers using various filters, including key terms (e.g. variants of the words “nature connection”) and a date range, to identify a range of potential papers to review. This pool is whittled down using selection criteria, such as specific types of research (e.g. excluding pilot studies), and quality checks (e.g. the level of experimental control used) until a subset of studies remains for analysis. The analysis can include collating their results to perform statistical analysis on a large, pooled group, to help determine effect sizes and the significance of findings. The challenge is that the researchers have to be very clear about how the selected studies can be compared and what specific constructs were being measured.
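To make the pooling step a little more concrete, here is a minimal Python sketch of one common approach, a fixed-effect (inverse-variance) pooled estimate. The three studies, their effect sizes and their standard errors are invented for illustration, and many real meta-analyses use random-effects models instead. The thing to notice is that the arithmetic will pool whatever numbers it is given: nothing in the calculation checks whether the studies measured the same construct.

```python
from math import sqrt


def pool_fixed_effect(effects, std_errors):
    """Inverse-variance (fixed-effect) pooled estimate and its standard error."""
    # More precise studies (smaller standard errors) get larger weights.
    weights = [1 / se ** 2 for se in std_errors]
    pooled = sum(w * d for w, d in zip(weights, effects)) / sum(weights)
    pooled_se = sqrt(1 / sum(weights))
    return pooled, pooled_se


# Hypothetical effect sizes from three invented studies, each assumed to
# have used a different nature connection measure.
effects = [0.40, 0.25, 0.55]
std_errors = [0.10, 0.20, 0.15]

pooled, se = pool_fixed_effect(effects, std_errors)
# Approximate 95% confidence interval using the normal distribution.
low, high = pooled - 1.96 * se, pooled + 1.96 * se
print(f"pooled effect = {pooled:.2f} (95% CI {low:.2f} to {high:.2f})")
```

With these made-up numbers it prints a pooled effect of about 0.42, with a confidence interval of roughly 0.27 to 0.57. That is a tidy-looking answer, but it is only meaningful if the three studies were measuring the same thing.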

Does it really matter?

It also affects how studies can be reported or interpreted outside of academia. Consider a very simple illustrative example using nature connection. One study used the Inclusion in Nature measure and found that 70% of people self-reported being one with nature, while another study, using the Connectedness to Nature measure, found that 80% self-reported high levels of nature connection. Does that mean a combined 75% across the two have high levels of nature connection? This example is unlikely to happen given the obvious difference between the measures; I am labouring the point. Both can be described as measuring nature connection, but they do so in very different ways: one is a graphical representation and the other uses a Likert format. While each study will make that clear, the difference may be lost in poorly informed media reporting, or in interpretation by organisations that don’t have the time or access to the full paper, or possibly don’t see a need to go into the detail. For them it may be enough to simply report that lots of people feel connected to nature.

Measuring behaviour.

Deltomme et al. (2023) considered the question of consistency much more rigorously than my simple example, with regard to measures of pro-environmental behaviour. The study used 13 widely used self-report measures and 3 experimental tasks to assess whether they measured pro-environmental behaviour in the same way, a property referred to as convergent validity. Subsets of the measures and tasks were randomly presented online to a sample of 340 Dutch undergraduate students (mean age 19 years; 62% male). In addition to assessing the measures, the study also considered how respondents completed them and their experience of doing so.

The results suggest that while each measure or experimental task had high internal consistency (which you would expect), they had very low convergent validity; that is, they were not measuring the same behavioural construct. The results also suggested that the self-report measures were affected by impression management bias, where participants’ responses reflect a more positive presentation of themselves, and 74% of participants admitted to multitasking and not paying full attention while completing the longest of the measures. There are sample limitations that might influence the bias and experience results; however, those limitations do not detract from the main point of the study, which is that the selected measures and tasks do not appear to provide a comparable assessment of pro-environmental behaviour. This limits the ability to generalise results from studies where similar but actually different measures have been used, and undermines the use of these measures to determine the effectiveness of interventions designed to increase pro-environmental behaviours.
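The difference between internal consistency and convergent validity can be hard to picture, so here is a minimal Python sketch using invented responses from six people to two hypothetical three-item scales. These are not items from any of the measures in the study. Internal consistency is estimated with Cronbach’s alpha, one common index, and convergent validity is checked, in a simplified way, with the Pearson correlation between the two scales’ total scores.

```python
from math import sqrt
from statistics import mean, variance


def cronbach_alpha(items):
    """Cronbach's alpha; items is a list of score lists, one per item,
    with respondents in the same order in every list."""
    k = len(items)
    totals = [sum(scores) for scores in zip(*items)]  # each respondent's total
    item_var = sum(variance(scores) for scores in items)
    return k / (k - 1) * (1 - item_var / variance(totals))


def pearson_r(x, y):
    """Pearson correlation between two equal-length lists."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    return cov / sqrt(sum((a - mx) ** 2 for a in x) * sum((b - my) ** 2 for b in y))


# Hypothetical 1-7 agreement ratings from six people on two invented scales.
scale_a = [
    [1, 2, 3, 4, 5, 6],
    [2, 2, 3, 5, 5, 6],
    [1, 3, 4, 4, 6, 6],
]
scale_b = [
    [3, 1, 5, 5, 1, 3],
    [3, 2, 5, 4, 1, 3],
    [4, 1, 4, 5, 2, 3],
]

alpha_a = cronbach_alpha(scale_a)
alpha_b = cronbach_alpha(scale_b)
total_a = [sum(s) for s in zip(*scale_a)]
total_b = [sum(s) for s in zip(*scale_b)]
r_ab = pearson_r(total_a, total_b)

print(f"alpha A = {alpha_a:.2f}, alpha B = {alpha_b:.2f}, r(A, B) = {r_ab:.2f}")
```

With these made-up numbers, each scale has a Cronbach’s alpha of about 0.95 or higher, yet the correlation between the two totals is close to zero (about -0.06). Each scale is consistent with itself, but the two do not agree with each other, which is the kind of pattern Deltomme et al. describe.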

And that’s why this matters - it could have implications outside of academic research, leading to misinformed public policies and funding decisions.

Questioning the question.

So, ensuring the validity of measures is critical in psychological research: measures need to measure what the researchers intend them to, so that the results can be used both to explore theoretical perspectives and to inform public discourse and policy. When developing a measure, researchers spend hours testing its validity and reliability with samples and statistical analysis, removing or revising items until acceptable thresholds for both are reached. However, as Elson et al. argue, that process can leave us with reduced insight into how a measure was developed, and Satchell et al. (2021) suggest that, amid the fallout from the so-called “replication crisis” in psychological research, validity has perhaps not received the attention it requires. To illustrate this point, they took several widely used measures of social media use, which have been included in studies that often reported levels of addictive use, and replaced the references to social media with wording about spending time with friends. The resulting Offline-Friend Addiction Questionnaire suggests that 69% of the 807 participants were addicted to spending time with their friends. While this is a slightly “tongue-in-cheek” piece of satirical research, it highlights that by changing just a few words in a self-report measure, everyday behaviours can be reported as problematic.

Next time you see a media report on a study, if you are able to, try to have a look at the paper itself to see whether the results have been represented well and whether the authors actually measured what is being reported. I know that isn’t easy to do: it takes time, some prior knowledge and often institutional access rights to publications (more open access papers please!). This is why I really appreciate blog posts by researchers themselves, who can provide an appropriate and accessible summary of their work.

As an aside, has anyone got a use for some 5 metre long by 10 cm wide bookcase shelves? Asking for a friend…

I would love to hear your thoughts on this topic (or any previous posts) so please feel free to add a comment, send an email to TheCompassionateNatureHub@gmail.com or add a reply if you see it via social media.

References

Note: I haven’t included the references for all of the example measures mentioned. If you would like a reference for a particular measure, I am happy to provide it on request.

Deltomme, B., Gorissen, K., & Weijters, B. (2023). Measuring pro-environmental behavior: Convergent validity, internal consistency, and respondent experience of existing instruments. Sustainability, 15(19), 14484. https://doi.org/10.3390/su151914484

Elson, M., Hussey, I., Alsalti, T., & Arslan, R. C. (2023). Psychological measures aren’t toothbrushes. Communications Psychology, 1(1), 25. https://doi.org/10.1038/s44271-023-00026-9

Satchell, L. P., Fido, D., Harper, C. A., Shaw, H., Davidson, B., Ellis, D. A., ... & Pavetich, M. (2021). Development of an Offline-Friend Addiction Questionnaire (O-FAQ): Are most people really social addicts? Behavior Research Methods, 53, 1097-1106. https://doi.org/10.3758/s13428-020-01462-9